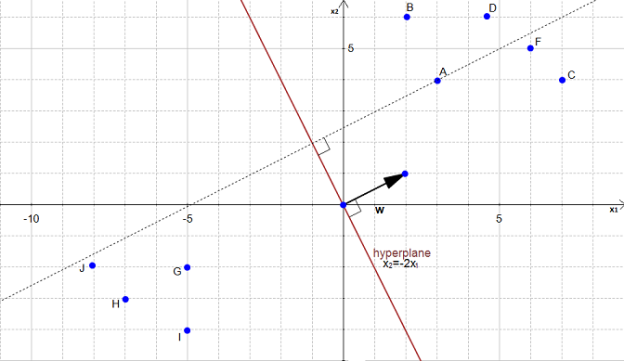

# SVM hyperplane series

I have been on a machine learning MOOC binge over the last year. The one weakness so far has been the treatment of support vector machines (SVM), which is a shame since other popular classification algorithms are covered. I should mention that there are two exceptions that do cover SVM: Andrew Ng's Machine Learning on Coursera and the Statistical Learning course on Stanford's Lagunita platform.

In my opinion, both courses miss the mark when it comes to SVM. Andrew Ng watered down the presentation of SVM classifiers compared to his Stanford class notes, but he still treated the subject much better than his competitors. Statistical Learning by Trevor Hastie and Rob Tibshirani was an amazing course, but unfortunately SVM was treated somewhat superficially. I hate to be critical of these two courses, since overall they are amazing and I am very grateful to the instructors for making this content available to the masses.

I decided to check out some other online content on SVM and have a stab at presenting the material in a style that is friendly to newbies. This will be a three-part series, with today's post covering the linearly separable, hard margin formulation of SVM. In a second post I will discuss a soft margin SVM, and in a final post I will discuss what is commonly referred to as the kernel trick for handling nonlinear decision boundaries.

I borrow heavily from the authors I will mention at the end of the post, so to add some original content I implement SVM in an Excel spreadsheet using Solver. I find that Excel is a great pedagogical tool, and I hope you agree with me and find the toy model interesting.
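For readers who would like to see the shape of the hard margin problem outside a spreadsheet, here is a minimal sketch in Python, assuming NumPy and SciPy are installed. It is not the author's Excel workbook: `scipy.optimize.minimize` with the SLSQP method is swapped in as a stand-in for Excel Solver, and the two-point dataset is hypothetical. The parameters `w1, w2, b` play the role of Solver's changing cells, `0.5 * ||w||^2` is the objective cell, and each training point contributes one constraint `y_i * (w . x_i + b) >= 1`.

```python
import numpy as np
from scipy.optimize import minimize

# Hypothetical toy data: one point per class, linearly separable.
X = np.array([[0.0, 0.0],
              [2.0, 2.0]])
y = np.array([-1.0, 1.0])

def objective(params):
    # params = [w1, w2, b], analogous to Solver's changing cells.
    w = params[:2]
    return 0.5 * np.dot(w, w)

def margin_constraints(params):
    # One value per training point; SLSQP "ineq" constraints require >= 0,
    # so this encodes y_i * (w . x_i + b) - 1 >= 0 (the hard margin rule).
    w, b = params[:2], params[2]
    return y * (X @ w + b) - 1.0

result = minimize(
    objective,
    x0=np.zeros(3),  # start from w = 0, b = 0 (infeasible, which SLSQP tolerates)
    method="SLSQP",
    constraints=[{"type": "ineq", "fun": margin_constraints}],
)

w, b = result.x[:2], result.x[2]
print("w =", np.round(w, 4), "b =", round(float(b), 4))
print("margin width =", 2.0 / np.linalg.norm(w))
```

For this toy data the optimum can be worked out by hand: w = (0.5, 0.5) and b = -1, so the margin width 2/||w|| equals 2√2, the distance between the two points. A spreadsheet version would lay out the same three decision cells, one objective cell, and one constraint column.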